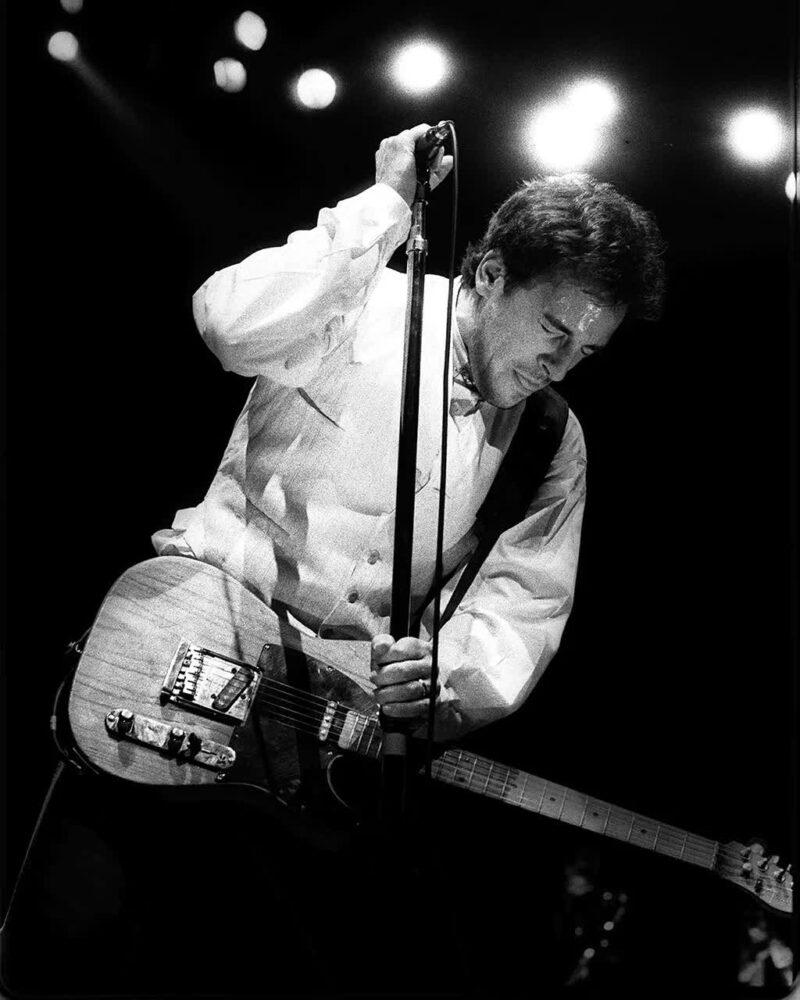

Bruce Springsteen’s brief struggle to recall the opening lyrics of Born to Run can reveal a lot about how our memories work (Credit: Stan Grossfeld/The Boston Globe/Getty Images)

By Sanjay Sarma and Luke Yoquinto (https://www.bbc.com)

We’ve all had those frustrating moments when we struggle to recall someone’s name, or a key bit of information stays for too long on the tip of our tongue. It turns out these momentary lapses may actually be good for our memory.

On 25 February 1988, at a performance in Worcester, Massachusetts, Bruce Springsteen forgot the opening lines to his all-time greatest hit, Born to Run.

According to the conventional wisdom about the nature of forgetting, set down in the decades straddling the turn of the 20th Century, this simply should not have happened. Forgetting seems like the inevitable consequence of entropy: memory formation represents a sort of order in our brains that inevitably turns to disorder. Given enough time, cliffs crumble into the sea, new cars fall to pieces, blue jeans fade. As Springsteen put it in his song Atlantic City: “Everything dies, baby, that’s a fact.” Why should the information in our minds be any different?

Comment and Analysis

Sanjay Sarma is the Fred Fort Flowers and Daniel Fort Flowers Professor of Mechanical Engineering and head of Open Learning at MIT and Luke Yoquinto is a science writer and a research associate at the MIT AgeLab. They are co-authors of Grasp: The Science Transforming How We Learn (2020, Doubleday/Little, Brown).

In such a model, the preservation of information like song lyrics requires constant upkeep – which, in the case of Born to Run, no one could accuse Springsteen of neglecting. By 1988, he had certainly repeated the lyrics to his 1975 hit thousands of times over. And so when he found himself staring out at the expectant Worcester audience at a loss, there was little he could do but confess into the microphone: “Sung it so damn much I forgot what the words were.”

According to the entropic model of forgetting, such a slip-up made little sense. And if that model were wrong (Springsteen hardly being alone in suffering the caprices of a forgetful brain) the consequences would be enormous. Schools and education systems around the world had been built based on the best psychological theories of the early 20th Century. If these models of learning – and its supposed opposite number, forgetting – were wrong, who could tell how many learners had been done a disservice? And even outside of school, how many of us would have wasted countless hours on thoughtless repetition – of golf swings, say, or French verbs, or wedding remarks – in a diligent but vain effort?

Efforts to explain forgetting date back to the late 1800s, when psychological researchers began – slowly, at first – to incorporate mathematical tools into their experiments. The German psychologist Hermann Ebbinghaus studied his own powers of recall by memorising long series of nonsense syllables, then recording how well he remembered them as time elapsed. His ability to summon up this meaningless information, he discovered, sloped downward over time in a curved distribution: he lost most of his hard-won syllables quickly, but a small percentage of them persisted in his memory long after his initial memorisation efforts.

These results seemed to support the intuitive idea that forgetting was the result of the simple erosion of information. But even in these early efforts, wrinkles appeared in the data suggesting that there might be more to forgetting than met the eye. Importantly, the timing of Ebbinghaus’s rehearsals wielded enormous influence over how well he remembered items, with a spaced-out practice schedule outperforming rehearsal sessions that were bunched together.

This finding was mysterious, hinting at some unexplained requirements of the memorising mind, but at the same time it was unsurprising. Indeed, the benefits of spacing out one’s studies were known to most students. “The schoolboy,” Ebbinghaus reasoned, “doesn’t force himself to learn his vocabularies and rules altogether at night, but knows that he must impress them again in the morning.”

In Ebbinghaus’s time these sorts of quantitative methods were the exception in psychological research, but a generation later, they were rapidly gaining adherents. Perhaps no psychologist was more responsible for this change than Columbia University’s number-loving psychologist Edward L. Thorndike, who argued that: “If a thing exists, it exists in some amount; and if it exists in some amount, it can be measured.”

Thorndike’s influence on both research psychology and educational practice is almost impossible to overstate. He was an incredibly prolific author of articles and books – including arithmetic books and a line of student dictionaries that bore his name into the new millennium – as well as early standardised tests. He served as president first of the American Psychological Association, and later of the American Association for the Advancement of Science. Perhaps most important, his research laid the groundwork for the influential mid-century movement in psychology known as Behaviorism, which attempts to explain behaviours purely as a function of environmental conditioning, not any intervening mental processes.

Thorndike’s early research concerned animal learning and frequently featured cats, which he often tasked with escaping from elaborate cages. From his observations he produced three basic laws of learning for human and non-human animals alike. These concerned how the brain “stamps in” associations (which he dubbed his Law of Effect); under what conditions learning occurs (his Law of Readiness); and how memories are maintained or forgotten: his Law of Exercise, which breaks down into sub-theories of use and disuse.

The theory of disuse was simple: If you don’t use a memory, you lose the memory. (Use, meanwhile, could preserve it, albeit only when accompanied by some kind of satisfying reward – the audible appreciation of an adoring crowd, for instance.)

Thorndike’s theory of forgetting largely aligned with Ebbinghaus’s observations, except it didn’t account for the still-mysterious fact that spaced rehearsal of information seemed to steel-plate information against forgetfulness. It would take decades for cognitive scientists to come up with a model of forgetting that satisfactorily accounted for this issue.

In the meantime, however, Thorndike’s trio of learning laws bolstered early-20th Century efforts to standardise education.

To be clear, Thorndike was in no way single-handedly responsible for the regularised forms education took around the world in the 20th Century. However, his ideas about learning – that it was quantifiable and that some students were innately better at it than others – supported visions of school where rigidly standardised conditions prevailed, in terms not only of standardised tests but also time spent in seats, classroom sizes and shapes, pedagogy, and metrics of student evaluation. Such interchangeable conditions allowed students to be compared to one another for supposedly meritocratic purposes.

In both the standardisation of education and the ongoing research into learning, forgetting became something of a sideshow. Its status began to improve, however, thanks to two separate research traditions begun in the 1960s and 1970s. One operates at the level of neurons and is detectable through tiny electrodes implanted in cells, while the other operates at the level of cognitive psychology and is detectable through cleverly designed quizzes.

At the cellular level, Eric Kandel, in a Nobel-winning series of studies, demonstrated that memories are preserved in the form of strengthened connections between neurons. Training regimes, he showed, whether conducted on intact, living, learning animals, or by electrically prodding neurons in a dish, create such beefed-up connections. And, as Ebbinghaus first observed, training (or rehearsal, or study) with extra time scheduled in between led these connections to be longer-lasting. This is a fact that holds true throughout the animal kingdom, from sea slugs to mammals.

The cellular machinery responsible for maintaining memories, then, may be prejudiced in favour of preserving information that we animals encounter repeatedly.

But what exactly is happening in the gaps in those spaced-out training, practice, or study regimens? At the cellular level, part of the answer may be that some of the mechanisms involved in preserving memories seem to require downtime: recharging periods, in effect, before neurons can get back to the work of strengthening their connections.

A different, yet perhaps complementary, answer is forthcoming in the research tradition of cognitive psychology. Here, a variety of studies suggest that gaps in one’s rehearsal or study schedule are so helpful because, counterintuitively, they create the opportunity for a salutary bit of forgetting.

To understand how forgetting can be useful, it’s important to first recognise that a memory is never simply strong or weak. Rather, the ease with which you can summon up a memory (its retrieval strength) is different from how fully represented it is in your mind (its storage strength). The name of your parent, for instance, would be one example of a memory with both high storage and retrieval strength. A phone number you held in your head only momentarily a decade ago could be said to have low storage and retrieval strength. The name of someone you met at a party mere minutes ago might have high retrieval but low storage strength. And finally, the lyrics to a song you’ve sung thousands of times but which stubbornly elude you, as you gaze out from the stage of the Worcester Centrum, would have high storage but distressingly low retrieval strength. Given the right cue, however – if your audience were to feed you the opening lines, for instance – the retrieval strength would snap right back.

Psychologists became aware of the distinction between storage and retrieval as early as the 1930s, when John Alexander McGeoch, a psychologist at the University of Missouri, tasked study subjects with memorising pairs of unrelated words. For example: every time I say pencil, you say chessboard. That task became far more difficult, he discovered, when, before asking his subjects to recite what they’d memorised, he confronted them with decoy pairs: pencil and cheese, pencil and table. The decoy pairs, it seemed, competed with the true pair for the memoriser’s attention.

As this line of research gained traction, the metaphor for forgetting changed. Forgetting, it seemed, was less like a cliff slowly collapsing into the sea, and more like a house deep in the woods that becomes harder and harder to find. The house might be perfectly sound – that is, its storage strength remains high – but if the path leading to it becomes surrounded by equally plausible paths leading the wrong way, one’s formerly clear mental map can transform into a maze.

In Springsteen’s case, it’s easy to see how his mental wayfinding might have gotten thrown off track. “The reason for the muff, apparently was that he was concentrating so much on the spoken introduction, telling the audience how the song has assumed a new meaning to him over the years,” the Los Angeles Times’ music critic wrote several days after the event. The new introduction meant he was approaching the same old memory from a different set of cues: a different starting point. Suddenly, the once-reliable path to the opening lines of the song was surrounded by false starts.

But soon, the lyrics came roaring back. And assuming this time the memory’s heightened accessibility stuck around, it would have been in keeping with then-cutting-edge research around retrieval and storage strength – measures which, though distinct from one another, turned out not to be independent.

In a landmark 1992 paper on “The New Theory of Disuse” – a title in direct conversation with Thorndike – the University of California, Los Angeles (UCLA) cognitive psychologists Robert and Elizabeth Bjork described a fascinating level of interplay between storage and retrieval. The retrieval of a memory adds to its storage strength, they showed, but with diminishing returns.

You might meet someone at a party and repeat her name to yourself in an attempt to add to the memory’s storage strength, but repetition will only take you so far: the sixth repetition won’t add much more heft than the fifth. What will add to its storage strength, however, is what the Bjorks call the “effortful retrieval” of that memory. Once the name is semi-forgotten, then, “at some time later, looking across the room and retrieving what that person’s name is – that can be a really powerful event in terms of your ability to recall that name later that evening or the next day”, Robert Bjork told us for our book Grasp: The Science Transforming How We Learn. By carrying out a challenging retrieval, you can increase a given memory’s storage strength and also increase your chances of retrieving it in the future.

In this party example, it is the time gap between when you meet your new acquaintance and, later, when you find yourself in need of her name, that makes for forgetfulness. In a series of earlier experiments starting in the 1970s, however, Robert Bjork found other ways to disorient his research subjects on their paths to a desired memory. He did so, for instance, by introducing confusing or irrelevant inputs, à la McGeoch, or by changing their sensory cues – sights, sounds, and smells that might trigger a memory – by asking them to recall information in new surroundings. Regardless of how forgetfulness came about, when overcome it led to stronger, longer-lasting memories.

Today, well-timed forgetting is part of a larger suite of educational approaches that the Bjorks have termed “desirable difficulties”: strategies that may initially annoy students, but which eventually yield dividends. The sort of forgetting that ultimately leads to stronger, more accessible memories can be produced by spacing out one’s study schedule, for instance, and also by interleaving one subject’s study sessions with another’s. Setting material aside, then revisiting it, can also do away with a student’s false sense of command, since memories with momentarily high retrieval strength may prove far less accessible a few days later.

In the years following the publication of the New Theory of Disuse, the Bjorks worked to spread the word about forgetting and other desirable difficulties – work necessitated by the simple fact that school is typically not set up to facilitate laudable acts of forgetting. Far from it: as a number of research papers have shown, on exam day, students who cram for exams actually outperform their counterparts who space out their studies. Only weeks and months later do the advantages of a spaced schedule win out, with the “spacers” substantially outperforming crammers. But by then, the exam is over.

Standardised structures of educational timing and assessment, many of which were established when Thorndike’s theories of learning were still new, are to this day disincentivising what we now know to be superior learning practices.

That shouldn’t stop learners of all ages – including adults in the working world – from making the most of our vast ability to not only take in new information, but to access it at the precise moment we need it. Even knowledge we might consider lost to the sands of time may still be hiding in our brains, waiting for the right cue to resurface. As Springsteen reminds us in Atlantic City, everything does die, but in the next line he continues: “Maybe everything that dies someday comes back.”

* Sanjay Sarma is professor of mechanical engineering and head of Open Learning at MIT (@mitopenlearning). Luke Yoquinto is a science writer and a research associate at the MIT AgeLab.
